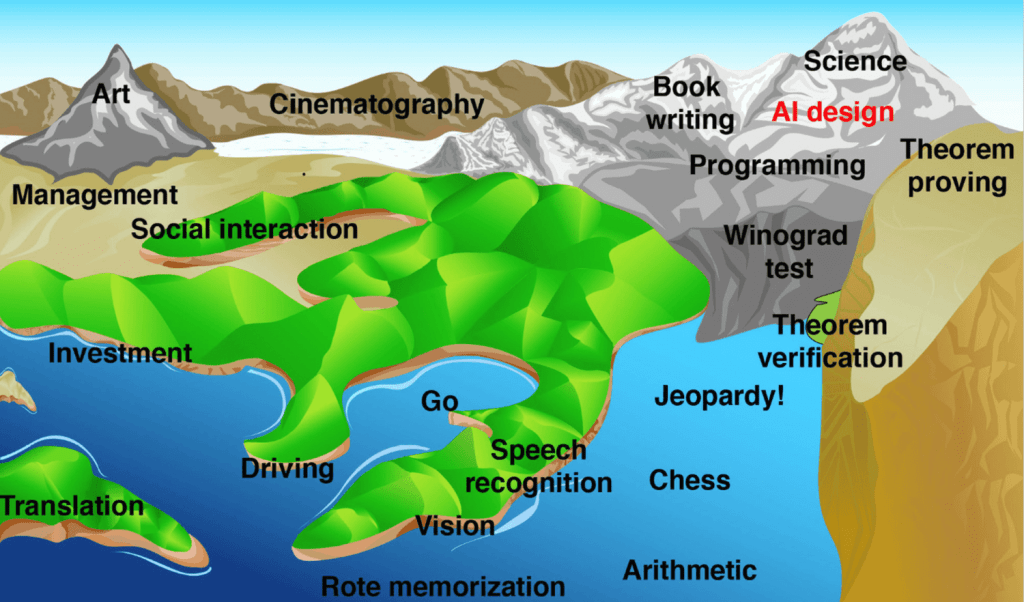

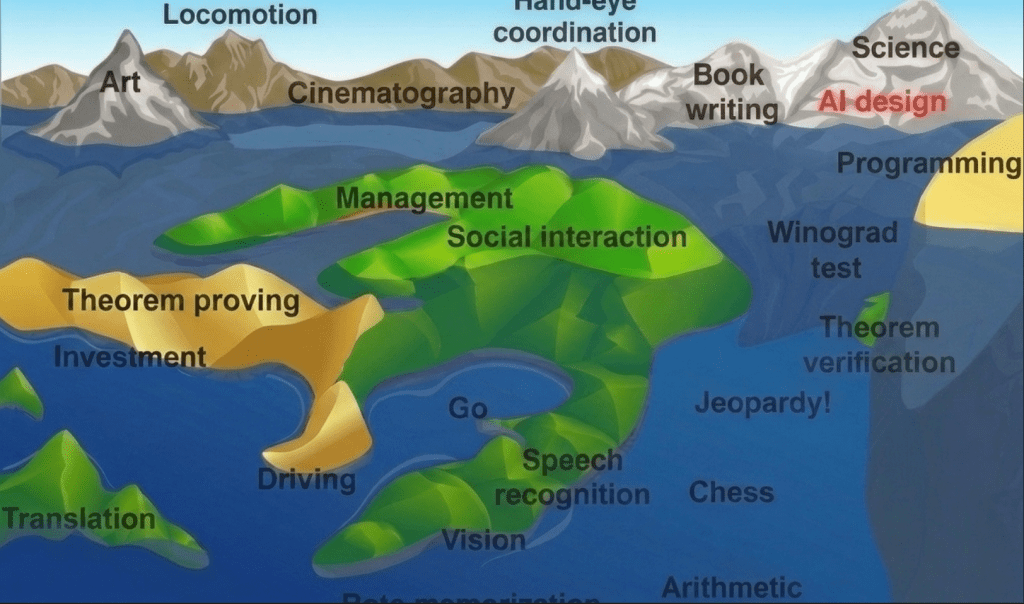

In 1997, computer science legend Hans Moravec wrote:¹

Imagine a “landscape of human competence,” having lowlands with labels like “arithmetic” and “rote memorization”, foothills like “theorem proving” and “chess playing,” and high mountain peaks labeled “locomotion,” “hand-eye coordination” and “social interaction.” We all live in the solid mountaintops, but it takes great effort to reach the rest of the terrain, and only a few of us work each patch. Advancing computer performance is like water slowly flooding the landscape. A half century ago it began to drown the lowlands, driving out human calculators and record clerks, but leaving most of us dry. Now the flood has reached the foothills, and our outposts there are contemplating retreat. We feel safe on our peaks, but, at the present rate, those too will be submerged within another half century.

Twenty years later, physicist Max Tegmark visualized “Moravec’s flood” in his lovely 2017 book, Life 3.0:²

“During the decades since he wrote those passages, the sea level has kept rising relentlessly, as he predicted, like global warming on steroids, and some of his foothills (including chess) have long since been submerged. What comes next and what we should do about it is the topic of the rest of this book. As the sea level keeps rising, it may one day reach a tipping point, triggering dramatic change. This critical sea level is the one corresponding to machines becoming able to perform AI design. Before this tipping point is reached, the sea-level rise is caused by humans improving machines; afterward, the rise can be driven by machines improving machines, potentially much faster than humans could have done, rapidly submerging all land. This is the fascinating and controversial idea of the singularity…”

In 2023, when I worked at OSTP, I kept a copy of this image on my door, periodically hand-coloring updates to the waterline as new capabilities emerged.

In the first quarter of 2026, AI can’t quite yet “zero-shot” the whole job of researching the current state of AI capabilities, updating the image accordingly, and drafting this post, but it is remarkably capable of crafting each element piecemeal (with coaxing). I’d guess that before the year is out, it will be able to execute this task end to end.

With some liberties taken, owing to both human and AI laziness, here is a quick-and-dirty update to the graphic and to the data that determine the waterline.

| # | Feature | Status | Source |

|---|---|---|---|

| 01 | Arithmetic | Deep submerged | AIME 2025: Gemini 3 Pro 100% |

| 02 | Rote memorization | Submerged Partially wet on max-context multi-hop | Long-Context Leaderboard (MRCR/RULER) |

| 03 | Chess | Deep submerged | Stockfish 18 Elo ~3650 vs Carlsen 2840 |

| 04 | Go | Deep submerged Adversarial residual | KataGo Distributed Training |

| 05 | Jeopardy-class trivia | Deep submerged HLE at waterline | HLE: Gemini 3.1 Pro 44.7% |

| 06 | Speech recognition | Deep submerged English clean | MLPerf Inference v5.1 (Whisper) |

| 07 | Vision | Submerged MMMU-Pro at human-expert ceiling | MMMU-Pro: Gemini 3.1 Pro 88.21% |

| 08 | Translation | SubmergedWaterline High-resource submerged; literary/classical at waterline | WMT25 en-zh: Algharb/Qwen3-14B ESA 88.4 · PoetMT (classical Chinese) |

| 09 | Winograd test | Submerged Partially wet on adversarial paraphrase | HuggingFace Eval Guidebook 2025 |

| 10 | Investment | SubmergedDry Algorithmic submerged; discretionary tier dry — no benchmark covers it | Alpha Arena S1: Qwen3-Max +22.32% · StockBench: Kimi-K2 #1 DJIA · FinTrust: all LLMs fail fiduciary |

| 11 | Driving | SubmergedDry Geofenced submerged; unstructured/weather dry | Waymo Safety Impact Hub |

| 12 | Theorem verification | SubmergedWaterline Textbook submerged; research autoformalization at waterline | miniF2F-Lean Revisited: HILBERT 99.2% |

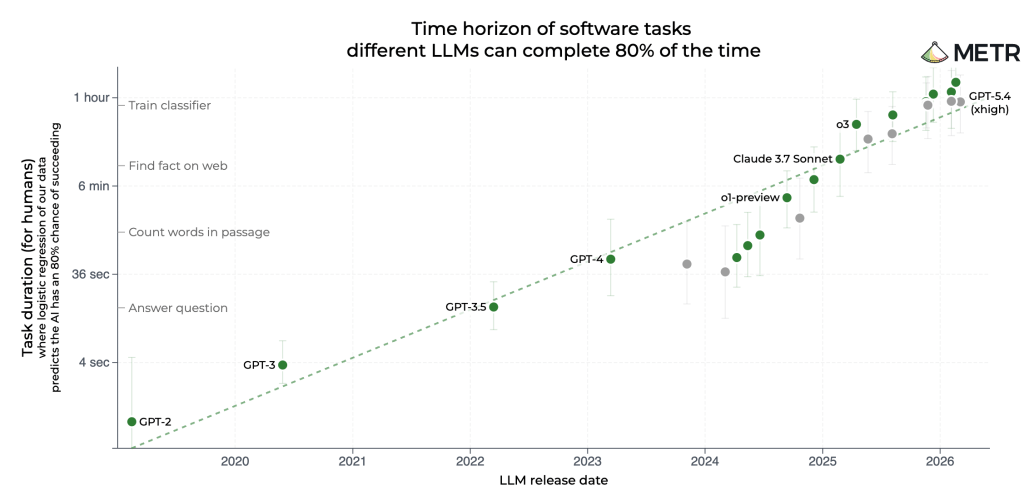

| 13 | Programming | SubmergedWaterline Verified submerged; Pro at waterline | SWE-Bench Pro: Claude Opus 4.5 45.9% |

| 14 | Art | Submerged Technically submerged; residual is socio-legal | Market signal: Fiverr “AI-first” / illustrator income collapse |

| 15 | Book writing | SubmergedDry Short-form submerged; novel-length dry | EQ-Bench Creative Writing v3 + Longform |

| 16 | Cinematography | SubmergedDry Short-clip submerged; feature-length dry | Runway AI Film Festival 2026 |

| 17 | Management | SubmergedDry Deliverables submerged; long-horizon coherence dry | GDPval v2: GPT-5.2 Pro 74.1% wins-or-ties |

| 18 | Theorem proving | SubmergedWaterlineDry Olympiad submerged; research at waterline; Tier 4 dry | IMO 2025: Gemini Deep Think gold (35/42) · FrontierMath Tier 4: GPT-5.4 Pro 38% |

| 19 | Science | Waterline | FutureHouse Robin (end-to-end discovery) |

| 20 | Social interaction | SubmergedDry Routine submerged; high-stakes dry | Capability: EQ-Bench 3 · Counter-signal: Klarna reversal |

| 21 | AI design Red peak | Partially wet ~70% submerged at peak | METR Time Horizon 1.1 · OpenAI Preparedness Framework v2 |

| 22a | Locomotion (unstructured) Moravec | Dry Lower slopes wet | Tien Kung 3.0 wins Beijing Robot Warrior Challenge · HumanoidBench · Musk: zero Optimus do useful work |

| 22b | Dexterous manipulation Moravec | WaterlineDry Structured pick-and-place at waterline; deformables/assembly dry | π*0.6: ~97% adversarial shirt-folding · GarmentLab · FMB peg-in-hole: ~60% |

| 22c | Tacit social judgment Moravec | Dry Mostly dry on peak; extrapolation unreliable | FANToM: GPT-4o vs human 87.5 · ExploreToM: GPT-4o ~9% · SimpleToM: 95% explicit / 15% applied |

With no sign of the underlying trend abating, I expect several more submergences before the year ends.

Moravec’s proposal, “that we build Arks as that day nears, and adopt a seafaring life!” seems increasingly sage…